KshemaGPT Part 2: How AI, Voice, and Multilingual Agents Empower Indian Farmers

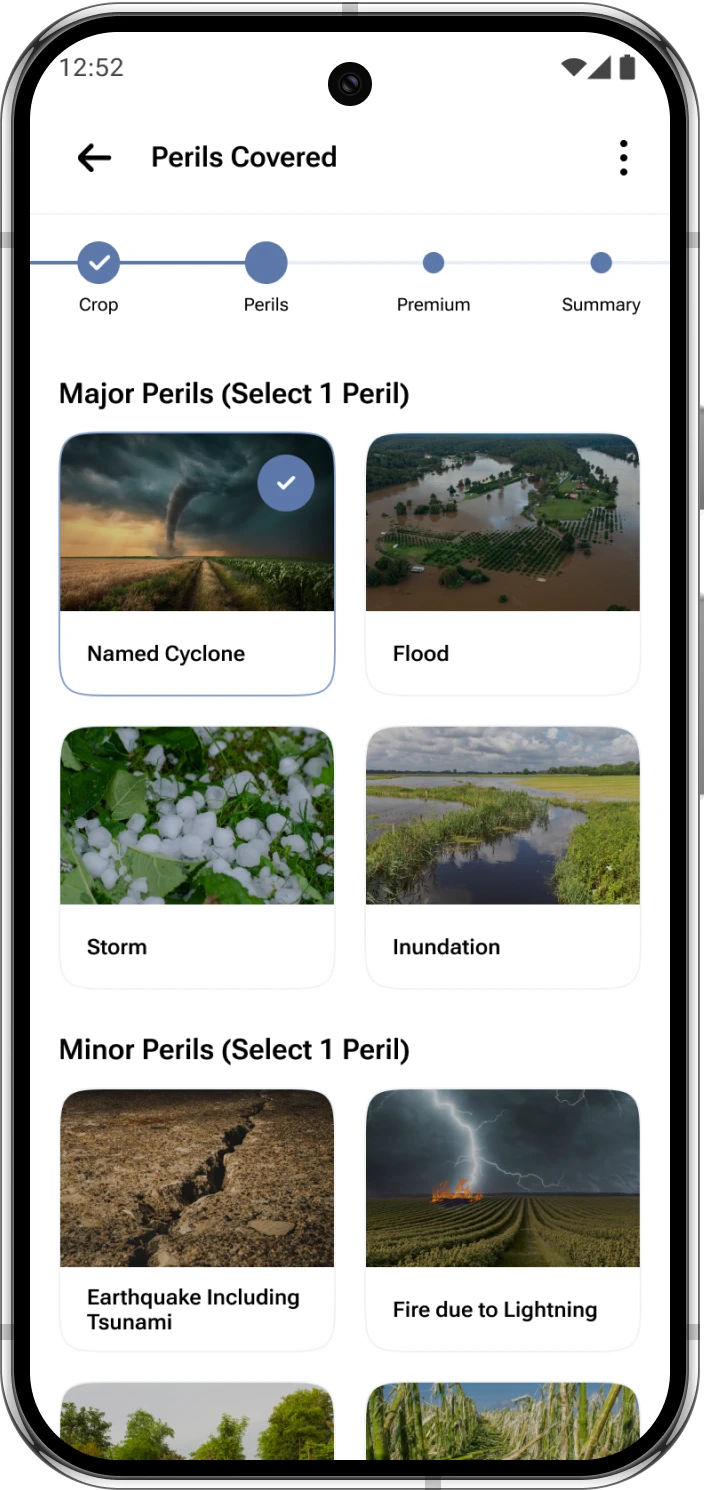

- Buy in easy steps

- Premium Starts at INR 499

- Protect 100+ Crops

- Quick & Easy Claims

User Data Agent: Personalizing the Farmer Experience

- User profiles

- Policy purchases

- Premium payment history

- Claims settlement status

Crop agent

When farmers have crop-related queries, they get routed to the Crop Agent. Initially, we explored several general-purpose agronomy models, but they lacked the specificity needed for region-specific crops and local farming practices.

We then integrated Dhenu2-In-Llama3.1-8B-Instruct, a specialised model trained by KissanAI on extensive crop data. This dramatically improved the relevance and depth of our responses enabling questions about disease management, pest control, and best practices, receiving tailored advice based on their specific crops and region.

Climate agent

The Climate Agent offers real-time weather updates, climate trend analysis, and predictive insights to help farmers make informed decisions about sowing, irrigation, and harvesting. Our initial approach used third-party APIs for weather data, but we encountered inconsistencies. By incorporating multiple sources and fine-tuning predictive models, we enhanced the accuracy of climate forecasts.

To further improve localisation, we trained the Climate Agent using in-house climate data from recent years, specifically focused on Indian agricultural regions. This data-driven approach allows KshemaGPT to offer highly localised weather-based recommendations. Farmers receive tailored insights on monsoon patterns, drought predictions, and optimal sowing windows based on actual historical trends, making it far more reliable than generic weather services.

Translation Agent: Multilingual Support for Rural India:

India’s linguistic diversity demands local language support. KshemaGPT uses:

IndicTrans2 by AI4Bharat

Supports English, Hindi, Telugu, and Tamil

Delivers farmer-friendly responses in native languages

Voice Agent: Speech-to-Text for Farmer Accessibility

Typing isn’t always practical for farmers, our main category of consumers. Many aren’t familiar with keyboards or comfortable expressing themselves in written form. Speaking, on the other hand, comes naturally. To support this, we implemented a speech-to-text pipeline using the open-source whisper-large-v3 model from OpenAI.

Typing isn’t practical for many farmers. KshemaGPT uses:

Whisper-large-v3 by OpenAI

Detects spoken language

Transcribes speech to English

Responds in the user’s preferred language

This voice-first model makes KshemaGPT intuitive and accessible.

Tracing, Observability and Prompt Management

Building LLM-based applications comes with its own set of challenges—complex control flows, non-deterministic outputs, and diverse user intent. Debugging and evaluating these systems, especially at scale, can be extremely difficult without proper tools.

We use Langfuse to bring observability into the heart of KshemaGPT. Langfuse allows us to:

- Trace and debug complex chains of model calls and tool invocations

- Centrally manage and version prompts, allowing quick experimentation and tuning

- Log and review user feedback for each interaction, helping us iteratively improve system behavior

Langfuse has become an essential part of our development and evaluation workflow, giving us the confidence to experiment boldly while staying grounded in real-world performance.

Learnings

Building KshemaGPT has been a process filled with experimentation, iteration, constant learning, and evolution. Here are some key insights that emerged along the way:

- Importance of routing logic: Our initial flat tool-calling setup didn’t scale. Hierarchical routing with a dedicated Router Agent drastically improved efficiency and accuracy.

- Separation or sorting: We learned that lumping all documents into a single collection was a mistake. Sorting by policy type reduced retrieval errors and improved relevance.

- Parsing is hard but OCR is harder: Handling PDFs with messy layouts and legibility of scanned pages required us to dig deep into OCR. GOT-OCR-2.0 was the breakthrough.

- Chunking isn’t just chopping: Chunking strategies must align with the document structure and output format. Chunking LaTeX by section using GOT-OCR-2.0 brought structure and meaning to our retrieval pipeline.

- Start simple before scaling up: Chroma DB was great for prototyping, but Milvus was necessary for production. The same goes for embedding and reranking models.

- Don’t ignore observability: Debugging multi-agent LLMs is hard. Langfuse helped us trace queries, manage prompts, and capture user feedback which played a huge role in iterative improvement.

- Language and voice are key: Farmers prefer speaking over typing. Whisper’s speech-to-text and IndicTrans2’s multilingual translation let us serve users in their native language.

- Real modularity enables iteration: Treating each capability as an agent lets us upgrade individual components like swapping parsers or models without breaking the system.

These learnings now inform every decision we make going forward with KshemaGPT.

Conclusion

By leveraging open-source models and frameworks, we’ve built an extensible, multilingual, and modular platform that can evolve. The architecture has been carefully designed to ensure that each part——is independently robust yet works seamlessly as part of a larger whole.

What started as a simple experiment has matured into a full-scale production system. While there’s still a long way to go, the progress we have made so far affirms the potential of AI in transforming rural livelihoods. With continued learning, iteration, and collaboration, we hope to further bridge the gap between technological advancement and on-ground impact.

KshemaGPT is not the destination—it’s a solid foundation for what comes next.